3D+Texture garment reconstruction (NeurIPS'20)

Dataset description

Overview

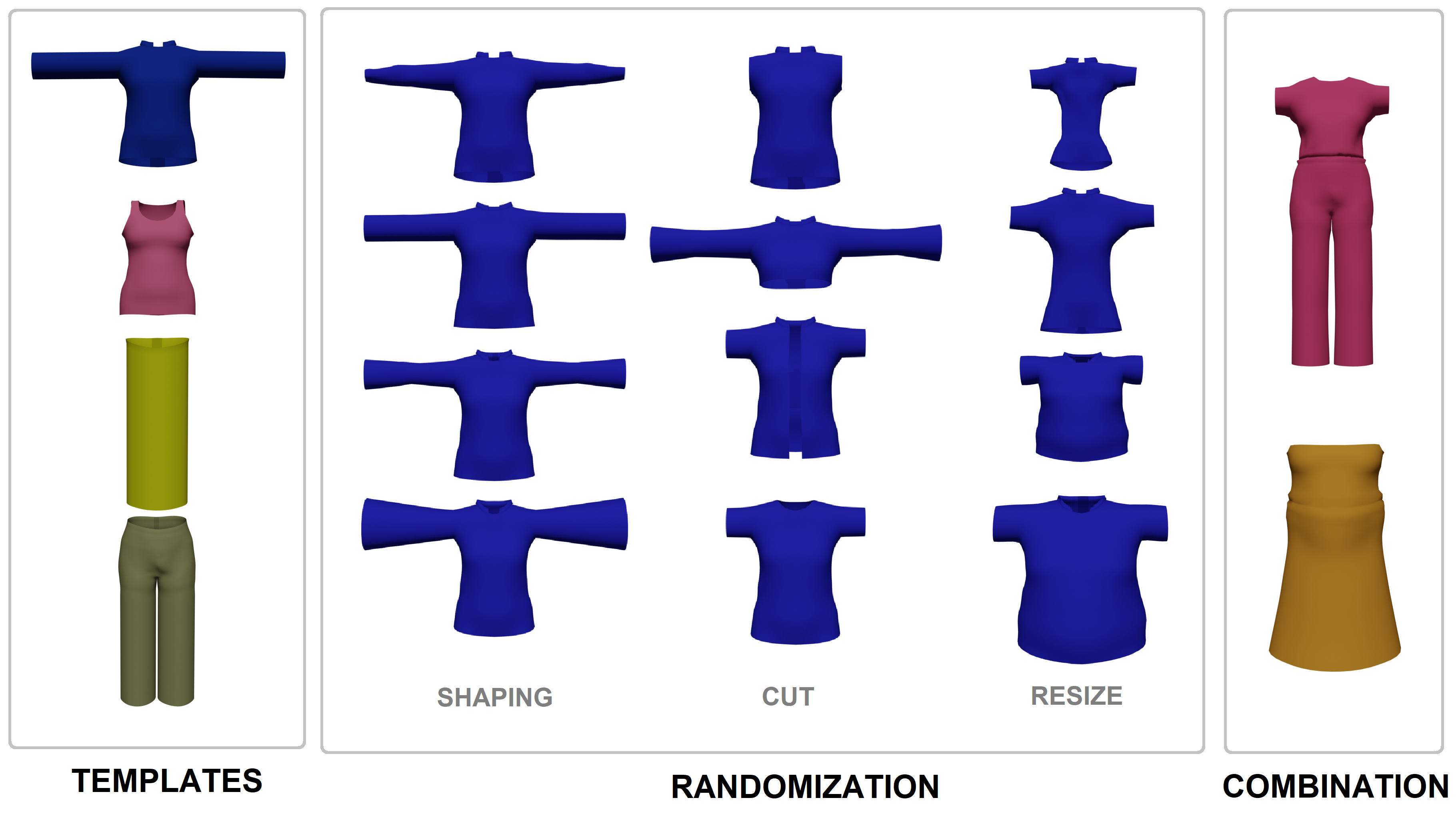

The dataset is an extension of the CLOTH3D dataset and includes 3D garments, texture data and RGB renderings. It contains more than 2M frames (8K+ sequences) of simulated and rendered garments in 7 categories, shown in Figure 1: Tshirt, shirt, top, trousers, skirt, jumpsuit and dress.

Figure 1. Unique outfit generation pipeline.

Garments are simulated on top of the SMPL body model, driven by MoCap data converted into SMPL parameters. Garments have between 3.7K and 17.2K vertices, with variable fabric, shape, tightness and topology, so each garment can show different dynamics on top of the same pose sequence. Rendering is performed with Blender's Cycles engine, using multiple light sources to simulate indoor and outdoor conditions, and garments are textured with either a pattern or a plain color. See Figure 2.

Figure 2. Samples of lighting, fabrics and texture patterns.

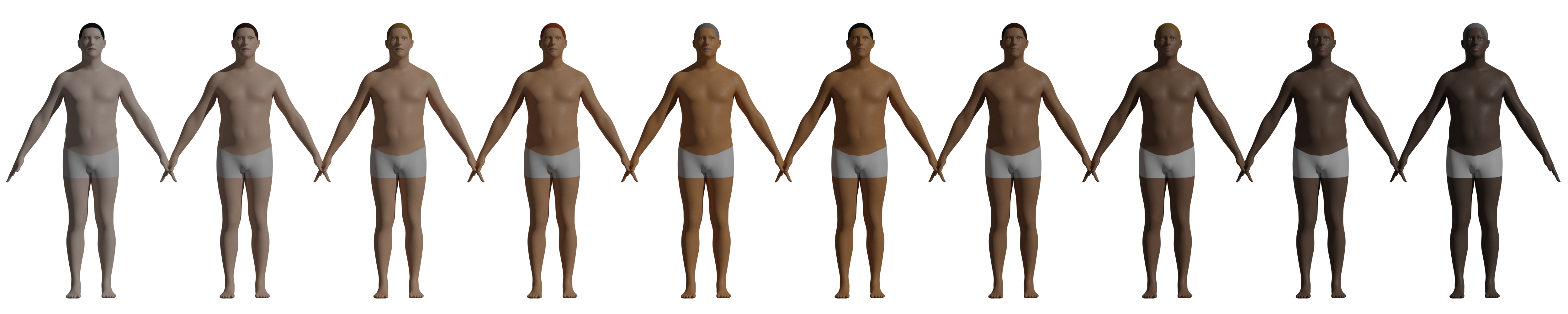

For extra realism and to reduce bias, human skin, hair and eyes are rendered with random tones. See Figure 3.

Figure 3. Different skin tones to avoid ethnic bias.

Finally, simulations are performed over a sequence of poses to obtain dynamic garments (see Figure 4). Frames are rendered as 640x480 RGB images with a transparent background, and each sequence is then compressed into a video.

Figure 4. Simulated garments in a sequence.

Metadata

Human metadata

Subjects are based on SMPL. The following metadata was used to generate the 3D human models:

- pose: SMPL pose parameters for each frame of the sequence (72, #frames),

- shape: SMPL shape parameters (10,),

- gender: subject's gender (0: female, 1: male),

- trans: SMPL root joint location (3, #frames). The root joint is first aligned at (0,0,0) and then moved to its corresponding location. NOTE: SMPL does not place the root joint at the origin by default.
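To make the array layout above concrete, the sketch below slices the SMPL parameters of a single frame out of the sequence arrays. It assumes the arrays have already been loaded from info.mat (for example with scipy.io.loadmat) and have the shapes listed above; the helper name `frame_smpl_params` is hypothetical and not part of the starting kit.

```python
import numpy as np

GENDERS = {0: "female", 1: "male"}  # encoding of the 'gender' field


def frame_smpl_params(pose, shape, trans, gender, t: int) -> dict:
    """Return the SMPL parameters of frame t (hypothetical helper).

    pose:  (72, #frames) axis-angle parameters, 24 joints x 3
    shape: (10,) body shape coefficients, constant over the sequence
    trans: (3, #frames) root joint location per frame
    """
    pose = np.asarray(pose)
    trans = np.asarray(trans)
    if pose.shape[0] != 72 or trans.shape[0] != 3:
        raise ValueError("expected pose (72, #frames) and trans (3, #frames)")
    if not 0 <= t < pose.shape[1]:
        raise IndexError(f"frame {t} out of range [0, {pose.shape[1]})")
    return {
        "pose": pose[:, t].reshape(24, 3),       # one axis-angle vector per joint
        "shape": np.asarray(shape).reshape(10),  # tolerates (1, 10) from .mat files
        "trans": trans[:, t],
        "gender": GENDERS[int(np.asarray(gender).item())],
    }
```

Reshaping to (24, 3) gives one rotation vector per SMPL joint; `.reshape(-1)` recovers the flat 72-dimensional vector when a model expects it.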

Garment metadata

Before describing the metadata, note that there are four garment fabrics: Cotton, Silk, Denim and Leather.

- outfit: description of the subject's garment(s),

- tightness: two-dimensional array describing the garment tightness. For details, see the CLOTH3D paper, Sec. 3.2.

The outfit description is a dictionary in which each key is a garment type and each value contains that garment's metadata, structured as follows:

- fabric: type of fabric used for the garment. It affects both cloth simulation and rendering.

- texture:

  - type: 'color' or 'pattern', i.e. a plain RGB color or a color pattern. For a color pattern, the sample folder contains a PNG file named '[GARMENT TYPE].png',

  - data: RGB color. Only if the texture type is 'color'.
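The outfit structure can be traversed as in the sketch below, which resolves each garment's appearance to either a plain RGB color or the path of its pattern PNG. It assumes the outfit has been converted to a plain Python dict with the keys described above ('fabric', 'texture', 'type', 'data'), for example via scipy.io.loadmat(..., simplify_cells=True). The helper `garment_appearance` and the example values are hypothetical; in particular, the range of the stored RGB values is not specified here.

```python
from pathlib import Path


def garment_appearance(outfit: dict, sample_dir: str) -> dict:
    """Map each garment type to ('color', rgb) or ('pattern', png_path)."""
    result = {}
    for garment_type, meta in outfit.items():
        texture = meta["texture"]
        if texture["type"] == "color":
            # Plain color: the RGB value is stored in the metadata itself.
            result[garment_type] = ("color", tuple(texture["data"]))
        elif texture["type"] == "pattern":
            # Pattern: the sample folder holds '[GARMENT TYPE].png'.
            result[garment_type] = ("pattern", Path(sample_dir) / f"{garment_type}.png")
        else:
            raise ValueError(f"unknown texture type: {texture['type']!r}")
    return result


outfit = {  # hypothetical example values
    "Tshirt": {"fabric": "cotton", "texture": {"type": "pattern"}},
    "Trousers": {"fabric": "denim", "texture": {"type": "color", "data": [0.1, 0.2, 0.5]}},
}
print(garment_appearance(outfit, "sequence_dir"))
```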

World metadata

This information describes the setup under which samples were rendered into RGB images.

- zrot: both the human model and the garments are rotated by a random angle (in radians) around the Z-axis,

- camLoc: camera location (3,). It remains static within a sequence,

- lights: light types and their configuration.

Two kinds of lights were used during rendering: 'sun' to simulate outdoor scenarios and 'point' to simulate indoor scenarios. The structure of the light metadata is as follows:

- type: 'sun' or 'point',

- data:

  - pwr: a scalar describing the light power (Blender units),

  - rot: three-dimensional array describing the light orientation in space. Each component is the rotation angle (in radians) about the corresponding axis. Only for 'sun'.

  - loc: location of the light in space. Only for 'point'.

NOTE: a sequence can have multiple 'point' lights. In that case, 'data' is a list in which each element is a dictionary with the structure detailed above.
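Since 'data' is a single dictionary for one light but a list for several 'point' lights, consuming code is simpler if both cases are normalized to a list first. A minimal sketch, assuming the light metadata has already been converted to plain dicts and lists with the field names above; `light_entries` is a hypothetical helper, not a starting kit function.

```python
def light_entries(lights: dict) -> list:
    """Return a list of (type, params) pairs, one per light source."""
    light_type = lights["type"]
    if light_type not in ("sun", "point"):
        raise ValueError(f"unknown light type: {light_type!r}")
    data = lights["data"]
    # Several 'point' lights are stored as a list; a single light as a dict.
    items = data if isinstance(data, list) else [data]
    # 'rot' only applies to 'sun' lights, 'loc' only to 'point' lights.
    required = {"sun": {"pwr", "rot"}, "point": {"pwr", "loc"}}[light_type]
    entries = []
    for item in items:
        missing = required - set(item)
        if missing:
            raise KeyError(f"{light_type} light is missing {sorted(missing)}")
        entries.append((light_type, item))
    return entries
```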

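NOTE2: the rest pose of the static garments (stored in the <garment_name>.obj files) is defined as follows.

```python
import numpy as np

# SMPL axis-angle pose parameters, one (x, y, z) row per joint (24 joints).
pose = np.zeros((24, 3), np.float32)
pose[1, 2] = 0.15   # joint 1 (left hip): +0.15 rad about the z-axis
pose[2, 2] = -0.15  # joint 2 (right hip): -0.15 rad about the z-axis
# pose.reshape(-1) gives the 72-dimensional SMPL pose vector.
```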

The root joint also has an additional rotation about the z-axis, stored in the info.mat file as the zrot variable. As shown in the starting kit demo, you can use the 'zRotMatrix' function in DataReader/util.py to compute the rotation matrix.
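For reference, a rotation by an angle zrot about the z-axis is the standard matrix built below. The sketch applies it to an (N, 3) vertex array with NumPy; it is an illustrative stand-in, not the starting kit's zRotMatrix, so check that function's sign and row/column-vector conventions before mixing the two.

```python
import numpy as np


def z_rotation_matrix(zrot: float) -> np.ndarray:
    """3x3 matrix rotating column vectors by zrot radians about the z-axis."""
    c, s = np.cos(zrot), np.sin(zrot)
    return np.array([[c, -s, 0.0],
                     [s, c, 0.0],
                     [0.0, 0.0, 1.0]])


def rotate_vertices(vertices: np.ndarray, zrot: float) -> np.ndarray:
    """Rotate an (N, 3) array of vertices (one per row) about the z-axis."""
    # Row-vector form of v' = R v, applied to every vertex at once.
    return np.asarray(vertices) @ z_rotation_matrix(zrot).T
```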

Data structure

Dataset folder has the following structure.

- <sequence 1>

  - <garment 1>.pc16 <======== vertex locations of the garment for the whole sequence

  - <garment 1>.png <======== texture image. Not saved for plain-color garments; the color is then available in the metadata.

  - <garment 1>.obj <======== vertices, faces and UV map of <garment 1> in the rest pose

  - <garment 2>.pc16

  - <garment 2>.png

  - <garment 2>.obj

  - <sequence 1>.mkv <======== rendered images compressed into a video

  - <sequence 1>_segm.mkv <======== video containing the body and garment silhouettes

  - info.mat <======== metadata and ground-truth information

- <sequence 2>

- ....

The name of <garment j> is its garment type, from the set {Top, Tshirt, Trousers, Skirt, Jumpsuit, Dress}. Each outfit contains 1 or 2 garments. As the structure shows, garment topology, number of vertices, texture and UV map are fixed throughout a sequence. Each sequence has up to 300 frames.
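The .pc16 files can be decoded with a few lines of NumPy. The sketch below assumes that .pc16 uses the header layout of the PC2 point-cache format (12-byte signature, version, number of points, start frame, sample rate, number of samples) followed by little-endian float16 coordinates; treat that layout as an assumption and prefer the starting kit's reader when available. `parse_pc16` and `read_pc16` are hypothetical names.

```python
import struct

import numpy as np

# Assumed PC2-style header: signature (12 bytes), version, nPoints,
# startFrame, sampleRate, nSamples (32 bytes, little-endian).
PC2_HEADER = struct.Struct("<12siiffi")


def parse_pc16(buf: bytes) -> np.ndarray:
    """Decode a .pc16 buffer into a (#frames, #vertices, 3) float32 array."""
    _sign, _version, n_points, _start, _rate, n_samples = PC2_HEADER.unpack_from(buf, 0)
    count = n_samples * n_points * 3
    # float16 coordinates follow the header; frombuffer raises ValueError
    # if the buffer is shorter than expected.
    coords = np.frombuffer(buf, dtype="<f2", count=count, offset=PC2_HEADER.size)
    return coords.astype(np.float32).reshape(n_samples, n_points, 3)


def read_pc16(path: str) -> np.ndarray:
    with open(path, "rb") as f:
        return parse_pc16(f.read())
```

Because topology is fixed over a sequence, each frame `verts[t]` can be paired with the face list of the garment's .obj file.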

Starting kit

We provide a starting kit to read, write and visualize the data here.

Download

To access the data links, you must register on the ChaLearn page. See below.

- Train: https://chalearnlap.cvc.uab.cat/dataset/38/data/72/files/

- Validation: https://chalearnlap.cvc.uab.cat/dataset/38/data/73/files/

- Test: https://chalearnlap.cvc.uab.cat/dataset/38/data/74/files/

Cite

@inproceedings{madadi2021cloth3d++,
  title={Learning Cloth Dynamics: 3D + Texture Garment Reconstruction Benchmark},
  author={Madadi, Meysam and Bertiche, Hugo and Bouzouita, Wafa and Guyon, Isabelle and Escalera, Sergio},
  booktitle={Proceedings of the NeurIPS 2020 Competition and Demonstration Track, PMLR},
  volume={133},
  pages={57--76},
  year={2021}
}

@inproceedings{bertiche2020cloth3d,
  title={CLOTH3D: Clothed 3D Humans},
  author={Bertiche, Hugo and Madadi, Meysam and Escalera, Sergio},
  booktitle={European Conference on Computer Vision},
  pages={344--359},
  year={2020},
  organization={Springer}
}
