2020 NeurIPS competition: 3D+Texture garment reconstruction

Challenge description

Overview

Humans are important targets in many applications. Accurately tracking, capturing, reconstructing and animating the human body, face and garments in 3D is critical for human-computer interaction, gaming, special effects and virtual reality. In the past, this has required extensive manual animation. Despite advances in human body and face reconstruction, modeling, learning and analyzing human dynamics still need further attention. With this competition we aim to push research in this direction, e.g. understanding human dynamics in 2D and 3D, with special attention to garments. We provide a large-scale dataset (more than 2M frames) of animated garments with variable topology and type. The dataset contains sequences of RGB images paired with 3D garment vertices. We paid special attention to garment dynamics and to realistic rendering of the RGB data, including lighting, fabric type and texture. We designed three tracks in which participants compete to develop the best method for 3D garment reconstruction and texture estimation in a sequence from (1) 3D garments and (2) RGB images. We expect participants to propose new models for learning garment dynamics.

Dataset

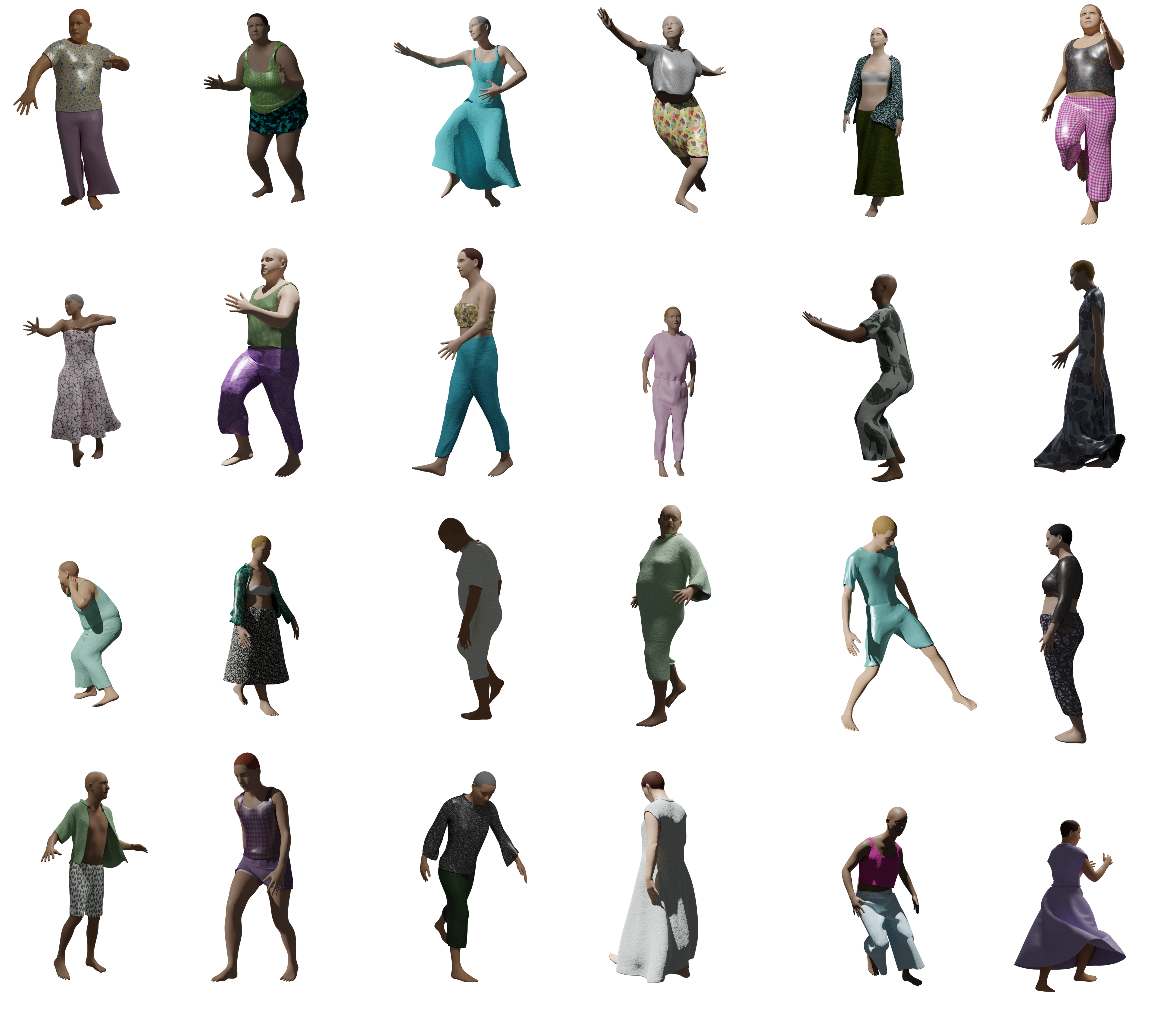

The dataset is an extension of the CLOTH3D dataset that includes 3D garments, texture data and RGB renderings. It contains more than 2M frames (8K+ sequences) of simulated and rendered garments in 7 categories: Tshirt, shirt, top, trousers, skirt, jumpsuit and dress. Garments are simulated on top of the SMPL model using MoCap data processed into SMPL parameters. Garments have between 3.7K and 17.2K vertices, with variable fabric, shape, tightness and topology. As a result, different garments can show different dynamics on the same pose sequence. Renderings are produced with Blender's Cycles engine, using multiple light sources to simulate indoor and outdoor scenes, and garments have either a texture pattern or a plain color. Finally, for extra realism and to reduce bias, human skin, hair and eyes are given random tones. Figure 1 shows some example frames. More information about the dataset is provided here.

Figure 1. Some sample frames of the dataset

Tasks

The competition contains three tracks, each running in two phases (development and test):

- 3D-to-3D garment reconstruction: In this task participants must train their models on 3D data comprising a 3D garment in rest pose, the body shape and a sequence of body poses. The goal of this task is to learn garment dynamics in order to build generative models for 3D reconstruction. Enter the competition here.

- Image-to-3D garment: In this task participants can build on the previous task, driving their generators with features extracted from images. This task is more challenging because the models must cope with lighting, occlusions, viewpoint, etc. Enter the competition here.

- Image-to-3D garment and texture reconstruction: In this task trained models must be able to predict a full 3D model plus texture. The texture must be given as a pattern image and a UV map. Enter the competition here.

Although we provide a ground-truth grid topology for each 3D garment in the dataset, participants are free in their model design and may generate any topology they find more suitable for the data. A triangulated mesh along with its 3D vertex locations is needed to evaluate the model.

Metrics and ranking strategy

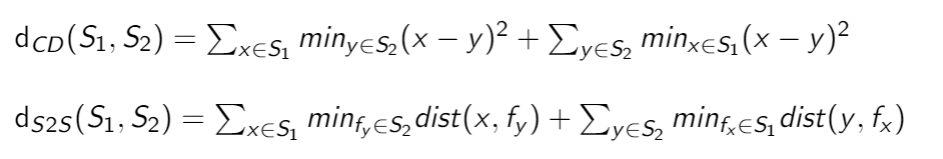

Chamfer distance (CD) is a common metric for the similarity of two point clouds. However, it does not take the surface into account and may produce a non-zero error for a perfect estimation, for example when the same surface is represented with different vertices. Instead, we use an extension of CD in which the distance is computed to the nearest face rather than the nearest vertex. We call this metric surface-to-surface (S2S) distance and use it to rank participants on reconstructed 3D garments (tracks 1 and 2). For 3D vertices x and y and triangulated faces fx and fy belonging to surfaces S1 and S2, respectively, the CD and S2S distances are computed as follows.
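To illustrate the difference between the two metrics, here is a minimal Python sketch of a vertex-based CD and a face-based S2S distance. It is not the official evaluation code: the function names are hypothetical, and the normalization (unsquared Euclidean distances, averaged per surface and summed over both directions) is an assumption. The official definition is the one that accompanies this section and is implemented in the starting kit. The point-to-triangle projection uses the standard region test described in Ericson's "Real-Time Collision Detection".

```python
import numpy as np

def closest_point_on_triangle(p, a, b, c):
    """Closest point to p on the (non-degenerate) triangle abc."""
    ab, ac, ap = b - a, c - a, p - a
    d1, d2 = ab @ ap, ac @ ap
    if d1 <= 0 and d2 <= 0:
        return a                                  # vertex region A
    bp = p - b
    d3, d4 = ab @ bp, ac @ bp
    if d3 >= 0 and d4 <= d3:
        return b                                  # vertex region B
    vc = d1 * d4 - d3 * d2
    if vc <= 0 and d1 >= 0 and d3 <= 0:
        return a + ab * (d1 / (d1 - d3))          # edge region AB
    cp = p - c
    d5, d6 = ab @ cp, ac @ cp
    if d6 >= 0 and d5 <= d6:
        return c                                  # vertex region C
    vb = d5 * d2 - d1 * d6
    if vb <= 0 and d2 >= 0 and d6 <= 0:
        return a + ac * (d2 / (d2 - d6))          # edge region AC
    va = d3 * d6 - d5 * d4
    if va <= 0 and (d4 - d3) >= 0 and (d5 - d6) >= 0:
        w = (d4 - d3) / ((d4 - d3) + (d5 - d6))
        return b + (c - b) * w                    # edge region BC
    denom = 1.0 / (va + vb + vc)
    return a + ab * (vb * denom) + ac * (vc * denom)  # inside the face

def chamfer_distance(x, y):
    """Assumed form: mean nearest-vertex distance, summed over both directions."""
    d = np.linalg.norm(x[:, None, :] - y[None, :, :], axis=-1)
    return d.min(axis=1).mean() + d.min(axis=0).mean()

def point_to_surface(points, verts, faces):
    """Distance from each point to its nearest triangle (brute force)."""
    tris = verts[faces]
    return np.array([
        min(np.linalg.norm(p - closest_point_on_triangle(p, *t)) for t in tris)
        for p in points
    ])

def s2s_distance(vx, fx, vy, fy):
    """Assumed form: mean nearest-face distance, summed over both directions."""
    return point_to_surface(vx, vy, fy).mean() + point_to_surface(vy, vx, fx).mean()

# The same unit square with two tessellations:
# 2 triangles, versus 4 triangles around an extra center vertex.
v1 = np.array([[0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0]], dtype=float)
f1 = np.array([[0, 1, 2], [0, 2, 3]])
v2 = np.vstack([v1, [[0.5, 0.5, 0.0]]])
f2 = np.array([[0, 1, 4], [1, 2, 4], [2, 3, 4], [3, 0, 4]])

cd = chamfer_distance(v1, v2)        # non-zero: the center vertex has no twin in v1
s2s = s2s_distance(v1, f1, v2, f2)   # zero: every vertex lies on the other surface
print(cd, s2s)
```

In this toy case both meshes describe exactly the same surface, yet CD is positive because the extra center vertex has no counterpart, while the face-based distance is zero. The brute-force loop is only suitable for small examples; garments with thousands of vertices would need a spatial index.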

In the 3rd track we measure the quality of the full reconstructed model through a qualitative evaluation by several human judges. First, we rank participants by the S2S metric, and the top 10 teams proceed to the qualitative evaluation. Then, we reconstruct the full garment model (3D + texture) for a number of samples and ask the judges to answer the following questions by comparing teams pairwise.

- Which team performs better in terms of realistic garment dynamics in the sequence?

- Which team looks more similar to the ground truth in terms of garment type and 3D reconstruction?

- Which team performs better in terms of realistic texture pattern? A team must be penalized if it always generates the same texture pattern or a plain color.

- Which team looks more similar to the ground truth in terms of texture pattern and color?

This results in a tensor of size (#Participants, #Participants, #Questions, #Judges). The final score of each participant is the average over the last three dimensions, and we rank participants by this score. Note that this score is computed only once, at the end of the competition, and is not shown on the leaderboard. However, we show the S2S score on the leaderboard during the development phase. Judges will not take human skin or hair color into account in their decisions.
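The aggregation described above can be sketched in a few lines of NumPy. The encoding is an assumption made for illustration: `votes[i, j, q, k]` is 1 when judge `k` prefers team `i` over team `j` on question `q` and 0 otherwise, and self-comparisons are left at 0. The function names are hypothetical, and the organizers' actual encoding and tie handling are not specified here.

```python
import numpy as np

def qualitative_scores(votes):
    """Average a (P, P, Q, J) preference tensor over its last three axes."""
    votes = np.asarray(votes, dtype=float)
    return votes.mean(axis=(1, 2, 3))

def ranking(scores):
    """Team indices ordered from best (highest score) to worst."""
    return np.argsort(-scores, kind="stable")

# Toy example: 3 teams, 4 questions, 2 judges.
# Team 0 is always preferred over teams 1 and 2; team 1 is always preferred over team 2.
votes = np.zeros((3, 3, 4, 2))
votes[0, 1] = 1
votes[0, 2] = 1
votes[1, 2] = 1

scores = qualitative_scores(votes)
order = ranking(scores)
print(scores, order)
```

Under this encoding a higher score is better, which is consistent with the rule that prize-eligible teams need higher qualitative scores than the baseline.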

How to enter the competition?

The competition will be run on the CodaLab platform. Participants need to register through the platform, where they can access the data, submit their predictions on the validation and test data (i.e., development and test phases) and obtain real-time feedback on the leaderboard. The development and test phases will open and close automatically according to the defined schedule.

Starting kit

We provide a starting kit along with this competition. The starting kit contains a few data samples and the functions and instructions needed to read, write, visualize and evaluate data. Participants can run a Python notebook demo to become familiar with the data structure. Please note that you must use the provided functions to write predictions into submission files, to avoid submission failures. You can download the starting kit here.

Making a submission

Each sequence in the dataset is stored in its own folder. You must save your predicted results using the same folder structure as the dataset. To submit your predicted results (for each track and phase), first compress your results from inside the root folder, without including the root folder itself in the zip file (please keep the filename as it is), as "the_filename_you_want.zip". Then sign in on CodaLab -> go to our challenge webpage on CodaLab -> go to the "Participate" tab -> "Submit / view results" -> "Submit" -> select your "the_filename_you_want.zip" file -> submit.
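One way to build such an archive is sketched below with Python's standard `zipfile` module. This covers only the compression step; the prediction files themselves should still be written with the functions from the starting kit. The helper name `zip_without_root` and the sample folder and file names (`results`, `seq_0001`, `prediction.bin`) are hypothetical placeholders, not the names used by the dataset.

```python
import tempfile
import zipfile
from pathlib import Path

def zip_without_root(root, zip_path):
    """Zip everything under `root`, storing paths relative to `root` itself."""
    root = Path(root)
    zip_path = Path(zip_path)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(root.rglob("*")):
            # Skip directories, and the archive itself if it lives under root.
            if path.is_file() and path.resolve() != zip_path.resolve():
                zf.write(path, arcname=path.relative_to(root).as_posix())
    return zip_path

# Demo on a throwaway directory with one placeholder sequence folder.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "results"
    (root / "seq_0001").mkdir(parents=True)
    (root / "seq_0001" / "prediction.bin").write_bytes(b"\x00")
    archive = zip_without_root(root, Path(tmp) / "the_filename_you_want.zip")
    with zipfile.ZipFile(archive) as zf:
        names = zf.namelist()

print(names)  # entries start at the sequence folder, not at "results/"
```

Listing the archive before uploading is a quick way to confirm that the entries start at the sequence folders rather than at the root folder.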

Warning: the last step ("submit") may take from a few minutes to hours depending on your internet speed (just wait). When the upload is complete, the file is sent to the backend workers for evaluation. If everything goes well, you will see your results on the leaderboard ("Results" tab). This process may take from one hour to several hours, depending on the accuracy of the predictions and on the backend queue length due to multiple submissions. Note that CodaLab keeps the last valid submission on the leaderboard. This helps participants receive real-time feedback on their submitted files. Participants are responsible for uploading, as their last valid submission, the file they believe will rank them best.

Post-challenge

Important dates regarding code submission and fact sheets are provided here.

- Code verification: After the end of the test phase, top participants are required to share with the organizers the source code used to generate the submitted results, with detailed and complete instructions (and requirements) so that the results can be reproduced locally. Only solutions that pass the code verification stage are eligible for the prizes and will be announced in the final list of winning solutions. Participants are required to share both training and prediction code together with the pre-trained model, so that organizers can run only the test stage if needed.

- Fact sheets: In addition to the source code, participants are required to share with the organizers a detailed scientific and technical description of the proposed approach using the template of the fact sheets provided by the organizers. LaTeX template of the fact sheets can be downloaded here (link will be provided soon).

Basic rules

According to the Terms and Conditions of the Challenge,

- "in order to be eligible for prizes, top ranked participants’ score must improve the baseline performance provided by the challenge organizers;" that is, lower S2S and higher qualitative scores.

- Participants who work together on the same methodology are considered a team. It is the responsibility of participants to declare their team on CodaLab. Each team is allowed a maximum of 1 submission per day.

- Participants are not allowed to use any other data than the provided dataset for the purpose of 3D reconstruction. However, intelligent data augmentation is allowed.

- "the performances on test data will be verified after the end of the challenge during a code verification stage. Only submissions that pass the code verification will be considered to be in the final list of winning methods;"

- "to be part of the final ranking the participants will be asked to fill out a survey (fact sheet) where a detailed and technical information about the developed approach is provided."

- Organizers have the right to update the rules in unforeseen situations (e.g. a tie among participants in the ranking) or to steer the competition in the way that best suits its goals.

- Researchers from organizers' and sponsors' institutions are not considered in the final ranking and are not eligible for the prize.

- The dataset cannot be used for commercial purposes.

Prize

We will provide travel grants to the top 3 performing methods of each track. The grant will be paid after the participants attend the conference. Proof of attendance is required for the grant payment, i.e. a copy of the flight ticket and conference badge. The exact amount of the grants will be announced later, upon final confirmation from the sponsors. We also provide one NVIDIA GPU, sponsored by NVIDIA, for the best student approach. This will be decided by the competition organizers, considering all top winning solutions from all 3 tracks as candidates.

We also invite participants to write a joint paper with the organizers. We plan to submit this paper to a relevant top-ranking conference. Co-author participants are selected based on the quality of their fact sheets, team ranks and the total number of teams.

The list of sponsors can be seen here:

- ChaLearn,

- NVIDIA,

- Facebook Reality Labs,

- Baidu. Prof. Guodong Guo, West Virginia University, is sponsoring on behalf of Baidu.
